This thesis developed a real-time system that detects, classifies, and locates sound events using only audio data. Using a network of 16 microphones and deep learning techniques, the system achieved 96% classification accuracy and an average localization error of 1.4 meters, demonstrating that sound-based analysis can effectively replace vision in monitoring applications.

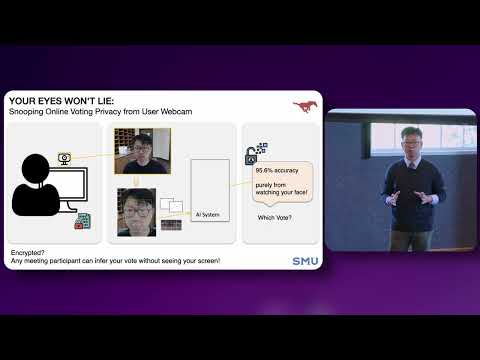

This research exposes a hidden privacy risk in online voting and video conferencing: eye movements captured by standard webcams can reveal users' choices. Using AI models, the researchers inferred voting decisions with over 95% accuracy, highlighting that digital security must address behavioral signals, not just encryption.

This research develops a framework for designing haptic technologies for virtual reality that balance immersion and practicality. By accounting for differences in sensitivity across the body, it introduces affordable, scalable devices (gloves, facial haptics, jackets, and floors) that enable full-body tactile feedback and bring realistic touch into VR experiences.