This research develops explainable AI systems to detect early signals of ideological extremism and potential violence in online communications. By integrating social science and machine learning, the project produces interpretable threat assessments to support prevention efforts. The framework also extends to healthcare, including explainable AI models for rare disease detection.

This neuroscience study shows that brief pre-lecture interactions significantly improve learning. Students who chatted with either a human teacher or an AI tutor before watching a video lecture performed better and showed greater brain synchrony in MRI scans. Social interaction—human or artificial—primes the brain for more effective learning.

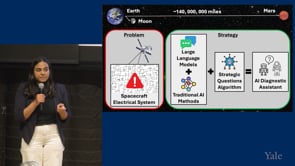

This research develops an onboard AI diagnostic assistant for space missions that can independently investigate life-critical anomalies. The system learns how humans ask strategic diagnostic questions and combines language models with traditional AI to actively reason through unprecedented spacecraft failures when communication with Earth is delayed.